Hasty impressions: Continuous testing using AutoTest.NET

Rinat Abdullin recently posted about Mighty Moose and AutoTest.NET, two projects for continuous testing in the .NET/Mono space. My interest was immediately piqued, as I'm a huge fan of continuous testing. I've been using py.test to run my Python unit tests for years now, almost solely because it offers this feature.

I'm taking a look at AutoTest.Net first. Mostly because it's free. If I'm going to use something at home, it won't be for-pay, and the Day Job has been notoriously slow at shelling out for developer tools.

Update: there was a bug that had been fixed on trunk, but not in the installer that I used. AutoTest.Net is better at detecting broken builds than I report below.

Setting up AutoTest.NET

Download and installation were straightforward. I opted to use the Windows installer package, AutoTest.Net-v1.0.1beta (Windows Installer).zip. I just unzipped, ran the MSI, let it install both VS 2008 and VS 2010 Add-Ins (the other components are required, it seems), and that was that.

Then I cracked open the configuration file (at c:\Program Files\AutoTest.Net\AutoTest.config). I just changed two entries:

BuildExecutable, and NUnitTestRunner

That's it. Well, for the basic setup.

Running the WinForms monitor

I opened a command prompt to the root of a small project and ran the WinForms monitor, telling it to look for changes in the current directory.

    & 'C:\Program Files\AutoTest.Net\AutoTest.WinForms.exe' .

The application started, presenting me with a rather frightening window:

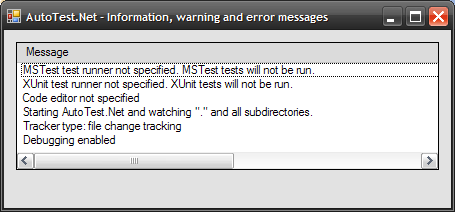

I mean, it makes sense. I have neither built nor run yet, so what did I expect? Still, I was taken aback by the plainness of it. Only temporarily daunted, I then hit the tiny unlabelled button in the northeast corner and got a new window. This was less scary.

Everything seemed to be in order. I hadn't specified MS Test or XUnit runners, nor a code editor. It says it's watching my files. So let's test it.
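For readers new to continuous testing, the "watch my files" part can be pictured as a simple polling loop. This is a minimal sketch of the general idea, not how AutoTest.Net is implemented; the function names, the polling interval, and the choice of file extensions are all assumptions made for illustration.

```python
import os
import time


def snapshot(root, extensions=(".cs", ".csproj")):
    """Map each matching file under root to its last-modified time."""
    seen = {}
    for folder, _dirs, files in os.walk(root):
        for name in files:
            if name.endswith(extensions):
                path = os.path.join(folder, name)
                seen[path] = os.stat(path).st_mtime_ns
    return seen


def changed_files(before, after):
    """Return paths that were added, removed, or modified between snapshots."""
    paths = set(before) | set(after)
    return sorted(p for p in paths if before.get(p) != after.get(p))


def watch(root, on_change, interval=1.0):
    """Poll root forever, calling on_change(paths) whenever something differs."""
    previous = snapshot(root)
    while True:
        time.sleep(interval)
        current = snapshot(root)
        delta = changed_files(previous, current)
        if delta:
            on_change(delta)  # hypothetical hook: rebuild and rerun the tests
        previous = current
```

Calling `watch(".", print)` would print the list of changed files after each save; a real tool would kick off a build and a test run instead.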

Mucking with the source

It's supposed to watch my source changes and Do The Right Thing. Let's see about that.

A benign modification to one test file

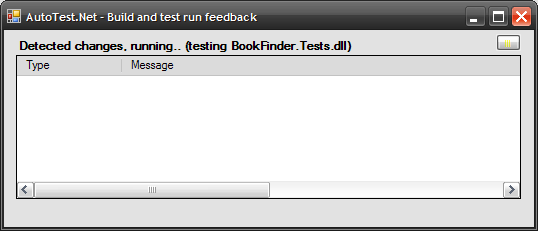

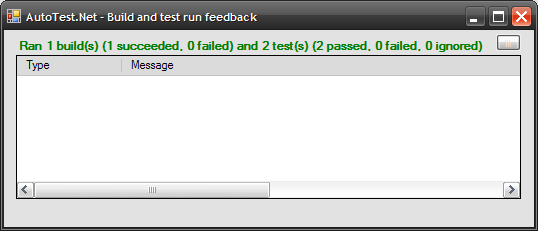

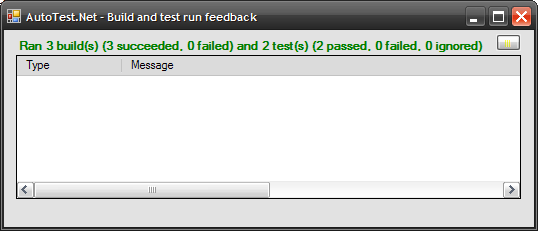

I changed the text in one of my test files. No functionality was changed - it was purely cosmetic. AutoTest.Net noticed, rebuilt the solution, and ran the tests! Pretty slick. Things moved quickly, but here's what I saw from the application:

A benign modification to one "core" file

Next I changed the text in one of the core files - this file is part of a project that's referenced by the BookFinder GUI project, and the test project. Again, this was a cosmetic change only, just to see what AutoTest.NET would do. It did what it should - built the three projects and ran the tests. See?

A core change that breaks a test

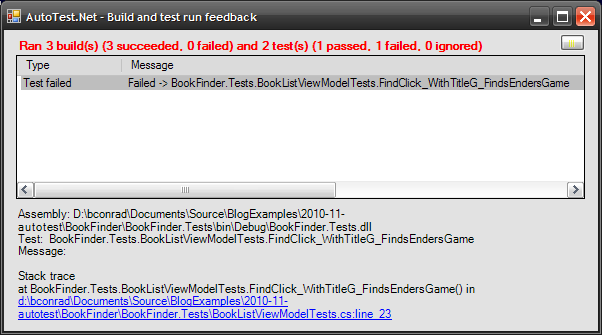

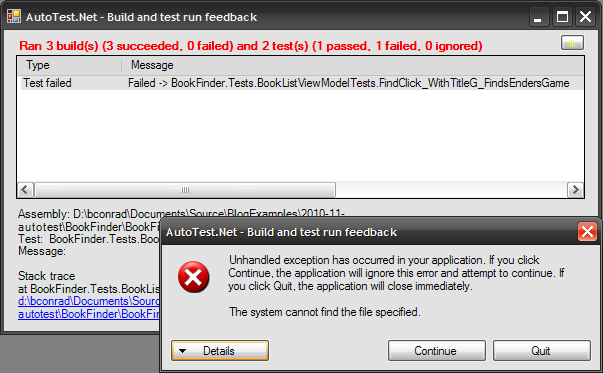

So, now I'll modify the core code in a way that breaks a test. It picks up the change, builds, tests, and does a really nice job of showing me the failure. I see the test that failed, and when I click it, I am presented with the stack trace, including a hyperlink to the source.

Unfortunately, clicking the hyperlink didn't go so well:

That was a little disappointing. On the brighter side, hitting "Continue" did continue, with no seeming ill-effects.

Redemption

Confession time. I hadn't checked the CodeEditor section of the configuration file. As it turns out, it had a slightly different path to my devenv than the correct one. I fixed up the path and tried again. This time, clicking on the hyperlink opened devenv at the right spot.

So the problem was ultimately my fault, but I can't help but wish for more graceful behaviour - how about an "I couldn't find your editor" dialogue? Ah, well. The product's young. Polish will no doubt come.
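The kind of check wished for here is cheap to do up front. The sketch below is hypothetical and says nothing about AutoTest.Net's internals: it takes entry names and paths as a plain dictionary (reading them out of AutoTest.config is left out, since the file's layout isn't shown in this post), and `missing_tools` is a name invented for the example.

```python
import os


def missing_tools(configured):
    """Return a message for every configured tool whose path does not exist."""
    problems = []
    for entry, path in configured.items():
        if not path:
            continue  # an unset entry just means that tool is not used
        if not os.path.isfile(path):
            problems.append(f"{entry}: could not find '{path}'")
    return problems
```

Run once at startup, a non-empty result could be shown to the user instead of failing later when a hyperlink is clicked.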

I repaired the code that broke the tests, and AutoTest.Net was happy again after rebuilding and rerunning the tests.

Syntax Error

For my last test, I decided to actually break the compile. This was kind of disappointing. It claimed to run the 3 builds and the tests, and said that everything passed. I'm not sure why this would be - I was really hoping for an indication that the compilation failed, but nope. Everything was rainbows and puppies. Spurious rainbows and puppies.

The VS Add-In

There's an add-in. You can activate it under the "Tools" menu. It looks and behaves like the WinForms app.

The Console Monitor

I am used to running py.test in the console, so I thought I'd check out AutoTest's console monitor next. I started it up, made a benign change, and then made a test-breaking change. Here's what I saw:

    [Info] 'Default' Starting up AutoTester
    [Info] 'AutoTest.Console.ConsoleApplication' Starting AutoTest.Net and watching "." and all subdirectories.
    [Warn] 'AutoTest.Console.ConsoleApplication' XUnit test runner not specified. XUnit tests will not be run.
    [Info] 'AutoTest.Console.ConsoleApplication' Tracker type: file change tracking
    [Warn] 'AutoTest.Console.ConsoleApplication' MSTest test runner not specified. MSTest tests will not be run.
    [Info] 'AutoTest.Console.ConsoleApplication'
    [Info] 'AutoTest.Console.ConsoleApplication' Preparing build(s) and test run(s)
    [Info] 'AutoTest.Console.ConsoleApplication' Ran 3 build(s) (3 succeeded, 0 failed) and 2 test(s) (2 passed, 0 failed, 0 ignored)
    [Info] 'AutoTest.Console.ConsoleApplication'
    [Info] 'AutoTest.Console.ConsoleApplication' Preparing build(s) and test run(s)
    [Info] 'AutoTest.Console.ConsoleApplication' Ran 3 build(s) (3 succeeded, 0 failed) and 2 test(s) (1 passed, 1 failed, 0 ignored)
    [Info] 'AutoTest.Console.ConsoleApplication' Test(s) failed for assembly BookFinder.Tests.dll
    [Info] 'AutoTest.Console.ConsoleApplication' Failed -> BookFinder.Tests.BookListViewModelTests.FindClick_WithTitleG_FindsEndersGame:
    [Info] 'AutoTest.Console.ConsoleApplication'

Not bad, but I have no stack trace for the failed test. Just the name. I'm a little sad to lose functionality relative to the WinForms runner. I know I wouldn't be able to click on source code lines, but still.
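Because the console monitor prints one summary line per run, the numbers are easy to pull out with a script. The following is a rough sketch that assumes the log format stays exactly as in the output I captured from the console monitor; the regular expressions and the `summarize` helper are my own invention, not part of AutoTest.Net.

```python
import re

# Matches the per-run summary line as it appears in the console output.
SUMMARY = re.compile(
    r"Ran (?P<builds>\d+) build\(s\) "
    r"\((?P<builds_ok>\d+) succeeded, (?P<builds_failed>\d+) failed\) "
    r"and (?P<tests>\d+) test\(s\) "
    r"\((?P<passed>\d+) passed, (?P<failed>\d+) failed, (?P<ignored>\d+) ignored\)"
)
# Matches the fully qualified name reported after "Failed ->".
FAILED_TEST = re.compile(r"Failed -> (?P<name>[\w.]+)")


def summarize(log_text):
    """Return (list of per-run count dicts, list of failed test names)."""
    runs = [
        {key: int(value) for key, value in match.groupdict().items()}
        for match in SUMMARY.finditer(log_text)
    ]
    failures = [match.group("name") for match in FAILED_TEST.finditer(log_text)]
    return runs, failures
```

Feeding it the captured output yields two runs and the one failing test name, which is enough to drive something like a pass/fail indicator in a terminal prompt.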

Gravy - Hooking up Growl

Undeterred by the disappointing performance in the Syntax Error test, I soldiered on. I use Growl for Windows for notifications, and I was keen to see the integration. I went back to the configuration file and input the growlnotify path. While I was there, I set notify_on_run_started to false (after all, I know when I hit "save"), and notify_on_run_completed to true. Then I fixed my compile error and saved the file.

In addition to the usual changes to the output window, I saw some happy toast:

Honestly, with a GUI or text-based component around, I'm not sure how much benefit this will be, but I guess I can minimize the main window and, so long as tests keep passing, still get some feedback. Still, it's kind of fun.

Impressions

I really like the idea of this tool. I love the idea of watching my code and continuously running the tests. The first steps are very good - I like the clickonable line numbers to locate my errors, and I think the Growl support is cute, but probably more of a toy than an actual useful feature.

Will I Use It?

Not now, and probably never at the Day Job. The inability to detect broken builds is pretty disappointing. Also, at work, I have ReSharper to integrate my unit tests. I've bound "rerun the previous test set" to a key sequence, so it's just as easy for me to trigger as it is to save a file.

At home? Maybe. If AutoTest.Net starts noticing when builds fail, then I probably will use it when I'm away from ReSharper and working in .NET.
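For what it's worth, the behaviour I was hoping for in the Syntax Error test is easy to state in code. This is a minimal sketch under one assumption: that the build tool and the test runner both signal failure through a non-zero exit code. The `build_then_test` function and its return strings are hypothetical, and the commands are whatever you would normally type at the prompt.

```python
import subprocess


def build_then_test(build_cmd, test_cmd):
    """Run the build; run the tests only if the build exited with code 0."""
    build = subprocess.run(build_cmd)
    if build.returncode != 0:
        return "build failed"  # never report test results for stale binaries
    tests = subprocess.run(test_cmd)
    return "tests passed" if tests.returncode == 0 else "tests failed"
```

The point of the early return is that a broken compile can never be reported as a green test run.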